About

I am a PhD student at the Hong Kong University of Science and Technology (Guangzhou), advised by Prof. Xu.

I received my bachelor’s degree in Computer Science and Technology from the University of Science and Technology Beijing, where I studied deep learning, computer vision, and reinforcement learning. After joining HKUST (Guangzhou), I shifted my research focus to humanoid robotics and worked as a research assistant for one year. I am now pursuing my PhD with a focus on humanoid whole-body control.

I enjoy working on interesting problems and finding elegant solutions.

Selected Publications

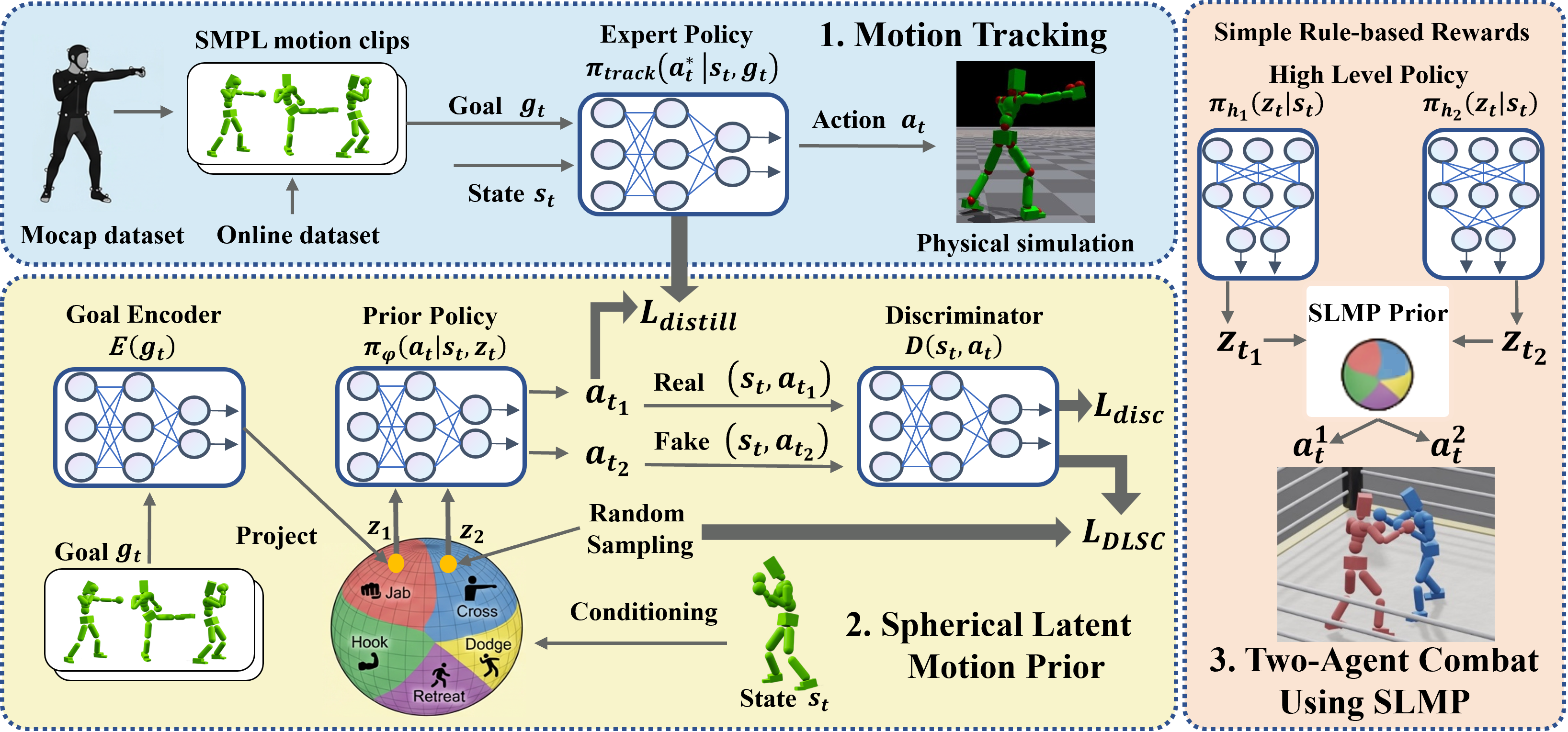

Spherical Latent Motion Prior for Physics-Based Simulated Humanoid Control

Jing Tan, Weisheng Xu, Xiangrui Jiang, Jiaxi Zhang, Kun Yang, Kai Wu, Jiaqi Xiong, Shiting Chen, Yangfan Li, Yixiao Feng, Yuetong Fang, Yujia Zou, Yiqun Song, Renjing Xu

arXiv preprint arXiv:2603.01294

A two-stage method for learning motion priors that distills a high-quality motion tracking controller into a spherical latent space for stable random sampling and diverse behaviors.

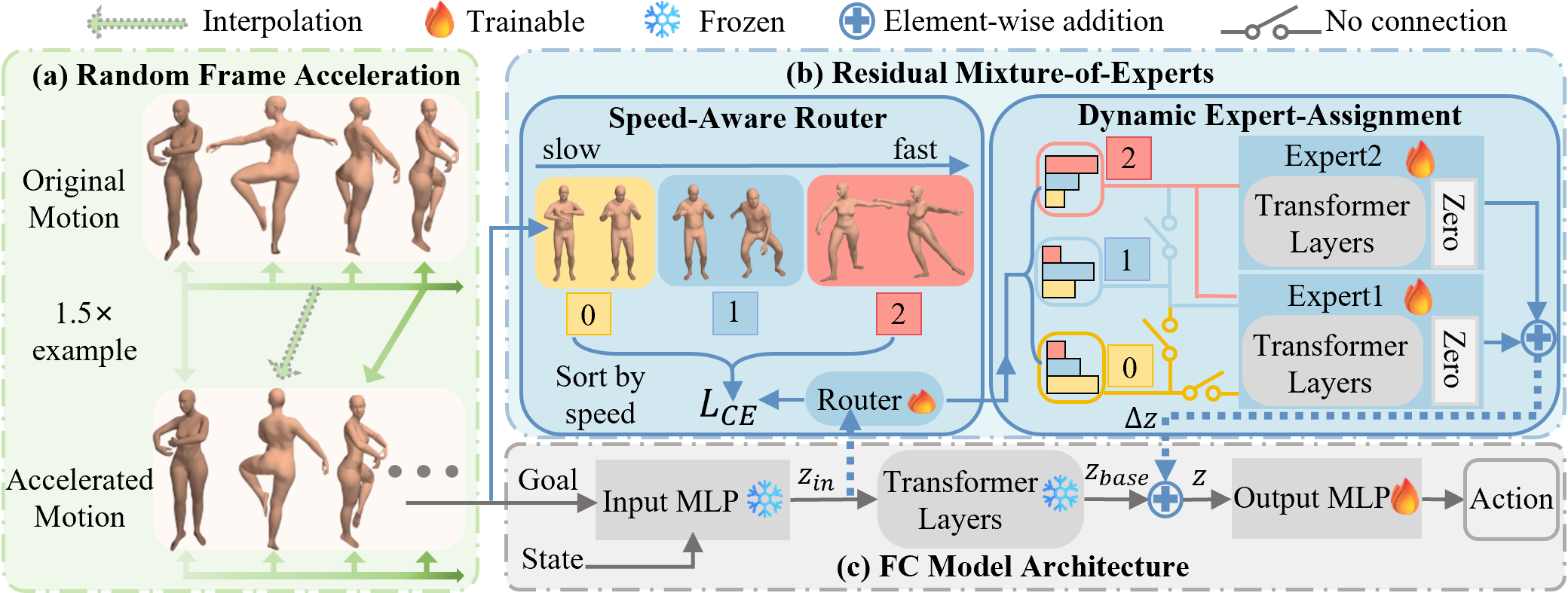

FARM: Frame-Accelerated Augmentation and Residual Mixture-of-Experts for Physics-Based High-Dynamic Humanoid Control

Jing Tan, Shiting Chen, Yangfan Li, Weisheng Xu, Renjing Xu

arXiv preprint arXiv:2508.19926

An end-to-end framework that improves robustness for explosive, high-dynamic humanoid motions via frame-accelerated augmentation and a residual mixture-of-experts controller.

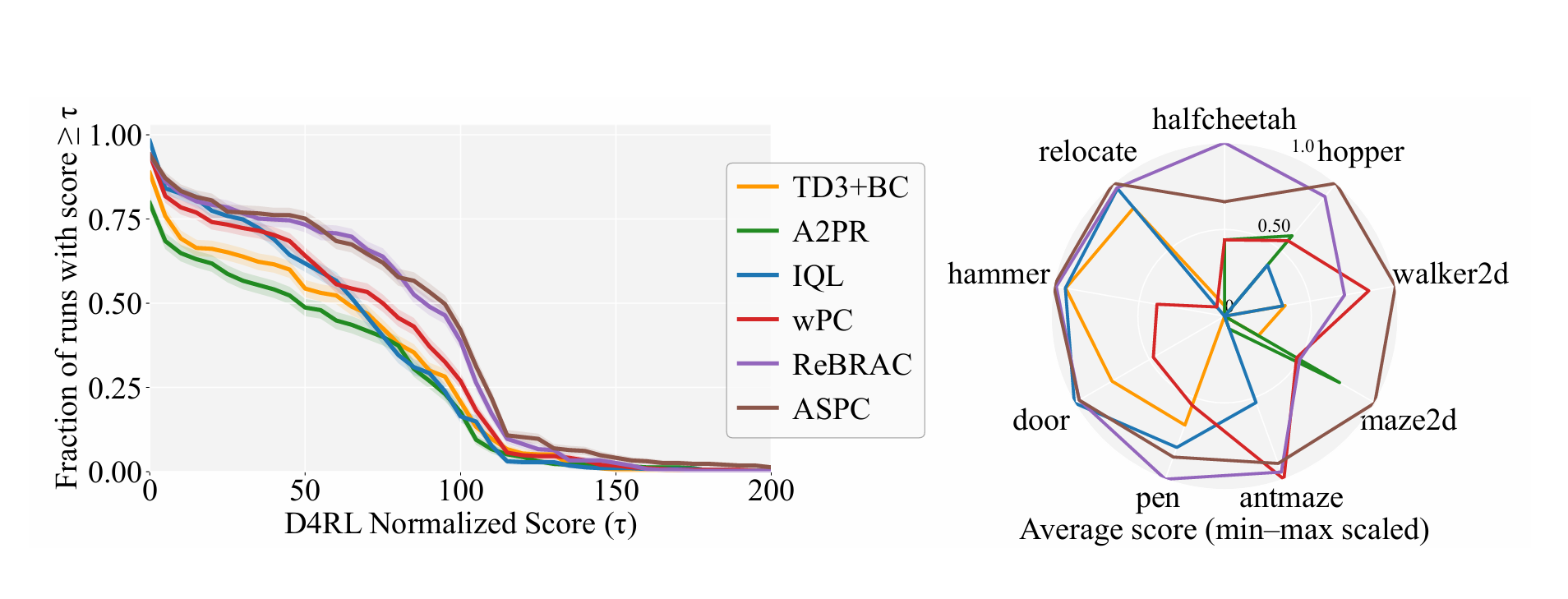

Adaptive Scaling of Policy Constraints for Offline Reinforcement Learning

Jing Tan, Xiaorui Li, Chao Yao, Xiaojuan Ban, Yuetong Fang, Renjing Xu, Zhaolin Yuan

arXiv preprint arXiv:2508.19900

A second-order, bi-level framework that automatically adjusts the policy-constraint scale in offline RL, reducing per-dataset hyperparameter tuning while improving performance.

News

Our paper CLAIMS was accepted to CVPR 2026. 🎉

Our paper ASPC was accepted to ICLR 2026. 🎉

Officially started my PhD at the Hong Kong University of Science and Technology (Guangzhou).

Our paper FARM was accepted to AAAI 2026 as an Oral presentation. 🎉

Publications

A collection of my research work.

Spherical Latent Motion Prior for Physics-Based Simulated Humanoid Control

Jing Tan, Weisheng Xu, Xiangrui Jiang, Jiaxi Zhang, Kun Yang, Kai Wu, Jiaqi Xiong, Shiting Chen, Yangfan Li, Yixiao Feng, Yuetong Fang, Yujia Zou, Yiqun Song, Renjing Xu

arXiv preprint arXiv:2603.01294, 2026

A two-stage method for learning motion priors that distills a high-quality motion tracking controller into a spherical latent space for stable random sampling and diverse behaviors.

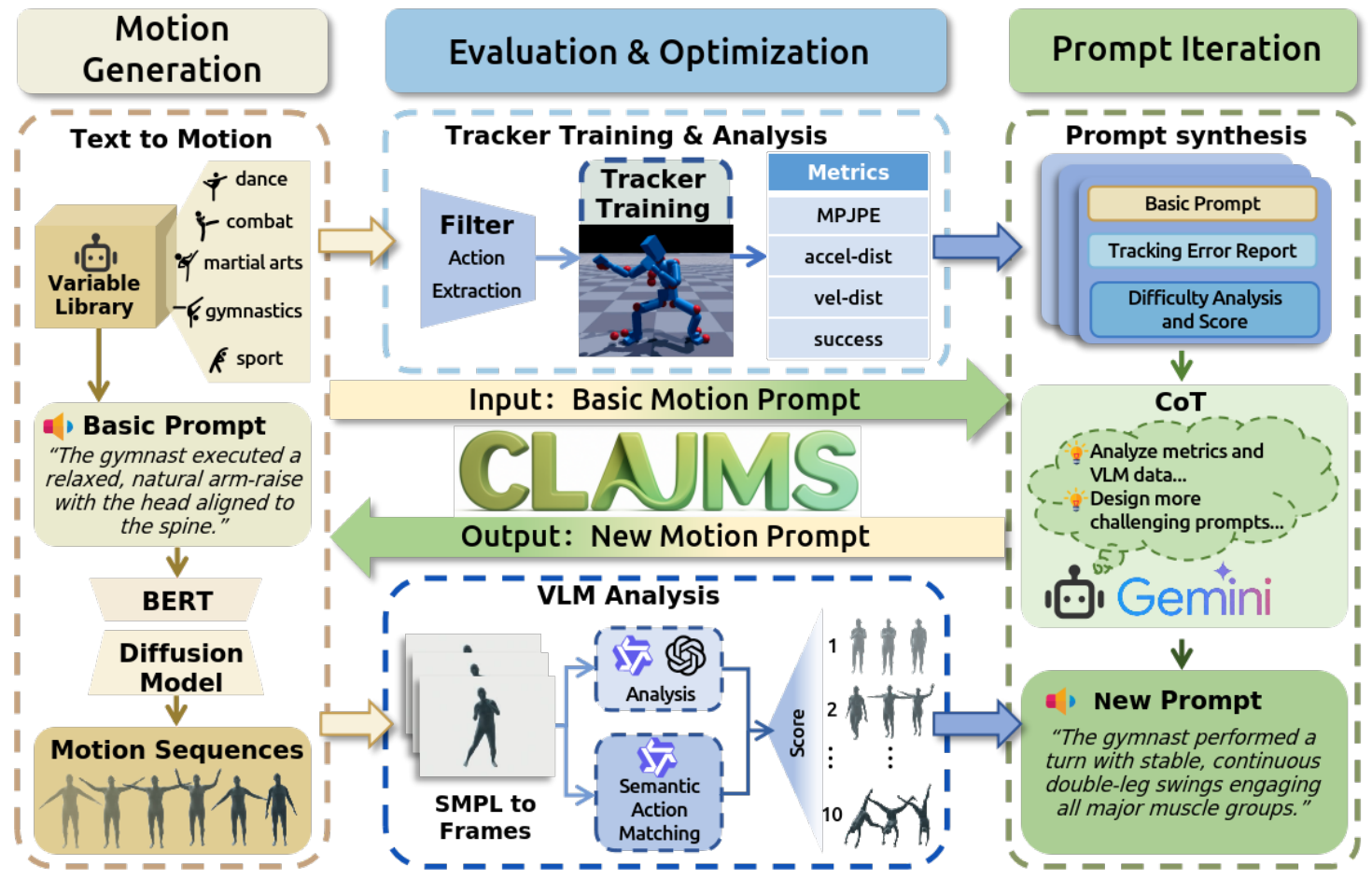

Iterative Closed-Loop Motion Synthesis for Scaling the Capabilities of Humanoid Control

Weisheng Xu, Qiwei Wu, Jiaxi Zhang, Jing Tan, Yangfan Li, Yuetong Fang, Jiaqi Xiong, Kai Wu, Rong Ou, Renjing Xu

arXiv preprint arXiv:2602.21599, 2026

A closed-loop automated motion data generation framework that iteratively scales humanoid control capabilities by synthesizing high-quality, diverse motion data and dynamically adjusting difficulty.

FARM: Frame-Accelerated Augmentation and Residual Mixture-of-Experts for Physics-Based High-Dynamic Humanoid Control

Jing Tan, Shiting Chen, Yangfan Li, Weisheng Xu, Renjing Xu

arXiv preprint arXiv:2508.19926, 2025

An end-to-end framework that improves robustness for explosive, high-dynamic humanoid motions via frame-accelerated augmentation and a residual mixture-of-experts controller.

Adaptive Scaling of Policy Constraints for Offline Reinforcement Learning

Jing Tan, Xiaorui Li, Chao Yao, Xiaojuan Ban, Yuetong Fang, Renjing Xu, Zhaolin Yuan

arXiv preprint arXiv:2508.19900, 2025

A second-order, bi-level framework that automatically adjusts the policy-constraint scale in offline RL, reducing per-dataset hyperparameter tuning while improving performance.

Services

Academic service and community involvement.

Conference Reviewer

2026 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)

Reviewer for CVPR 2026.